Issues on VR

Virtual reality (VR) can serve engineers and designers as a practical tool for powerful simulation. Although driver behavior has been modeled and simulated for years, the cognitive and mental aspects of driving deserve more thorough investigation under various driving conditions. We are experimentally examining how changes in factors such as speed, vehicle width, and lane change patterns affect driver behavior in a task of avoiding barriers on the road. The results showed that these changes produced differences in response timing as well as in vehicle position on the road. Furthermore, we have explored the effectiveness of head movement as an interaction technique for steering control in VR.

Fluid Interface

Computers are now a common tool for everyday activities, including document management and messaging. This in turn requires us to design computers carefully so that anyone can use them without special skills.

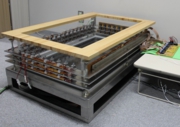

We developed a multidip interface that uses water as a medium of interaction and relies on total internal reflection to sense hand gestures under water in a tub effectively. The user can communicate with the system not only by touching the water surface but also by moving in the depth direction. The system consists of a transparent acrylic tub filled with water, video cameras, a video projector, and a PC. Virtual objects generated by the PC are projected onto the water in the tub by the projector. The projected objects appear at the bottom of the tub but look as if they are floating on the water.
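As a rough illustration of the optical principle, the sketch below computes the critical angle beyond which light travelling in water is totally reflected at the water surface. The refractive indices (about 1.33 for water and 1.00 for air) are textbook values assumed here, and the function name is hypothetical; the actual optical arrangement of the system is not described in this overview.

```python
import math


def critical_angle_deg(n_inside: float, n_outside: float) -> float:
    """Angle of incidence (degrees) beyond which total internal reflection occurs."""
    if n_outside >= n_inside:
        raise ValueError("total internal reflection needs n_inside > n_outside")
    return math.degrees(math.asin(n_outside / n_inside))


# Assumed refractive indices: water ~1.33, air ~1.00 (not taken from the system itself).
WATER_TO_AIR_DEG = critical_angle_deg(1.33, 1.00)
```

Light striking the surface from below at a steeper grazing angle than this stays inside the water, which is the general effect that such sensing could exploit.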

Since a camera-based setup requires a larger space, we have presented a framework that uses pairs of a laser and a phototransistor to detect and track objects in the water, as shown in the figure on the right. A display monitor is mounted under an acrylic water tank. The system could be used to develop an interactive foot bath, known as Ashiyu, which is a common activity in Japan.
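One way to picture detection with laser and phototransistor pairs is as a row of parallel beams, where an object in the water blocks the beams it covers. The sketch below is only an assumption about such a layout (evenly spaced parallel beams along one axis, with a hypothetical function name and spacing parameter), not the framework's actual design.

```python
def blocked_objects(lit, spacing_cm=1.0):
    """Group consecutive blocked beams into objects.

    lit[i] is True when phototransistor i still receives its laser beam.
    Returns a list of (center_cm, width_cm), one entry per run of blocked beams.
    """
    objects = []
    start = None
    # A trailing True acts as a sentinel that closes a run ending at the last beam.
    for i, is_lit in enumerate(list(lit) + [True]):
        if not is_lit and start is None:
            start = i
        elif is_lit and start is not None:
            end = i - 1
            center = (start + end) / 2 * spacing_cm
            width = (end - start + 1) * spacing_cm
            objects.append((center, width))
            start = None
    return objects
```

Running the same grouping on a second, perpendicular row of beams would give a two-dimensional position, and comparing results between successive readings would give a simple form of tracking.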

Gait Tracking and Analysis

Powerful and novel gestural interfaces have attracted considerable interest, and various systems and tools have been developed. Microsoft Kinect is a good example. However, the user is required to stay within a limited range while performing gestures. Given that the ability to walk upright is an evolutionary step forward and one of the hallmarks of being human, it is worth considering gestures that involve movement across the floor, that is, human gait in practical situations.

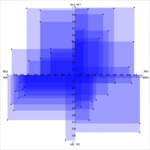

We have been investigating footstep tracking of individuals walking on a floor sensor device. The system can identify pairs of footsteps of individuals walking on the device in real time. In addition, we implemented a mechanism for estimating the lower body motion of a walking person, in which inverse kinematics (IK) is applied to compute the joint angles in a body model. The framework could be applied to rehabilitation, home security systems, and digital signage.
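To give a feel for how IK can turn a foot position into joint angles, here is a minimal sketch for a planar two-link leg (thigh and shank) with the hip at the origin. The two-link model, the segment lengths, and the function names are assumptions made for illustration; the body model used in the actual system is not specified here.

```python
import math


def leg_ik(ankle_x, ankle_y, thigh, shank):
    """Return (hip, knee) angles in radians that place the ankle at (ankle_x, ankle_y).

    hip is the thigh direction measured from the x axis; knee is the bend of the
    shank relative to the thigh.
    """
    dist_sq = ankle_x ** 2 + ankle_y ** 2
    cos_knee = (dist_sq - thigh ** 2 - shank ** 2) / (2 * thigh * shank)
    # Clamp so that out-of-reach targets yield a straight or fully folded leg.
    cos_knee = max(-1.0, min(1.0, cos_knee))
    knee = math.acos(cos_knee)
    hip = math.atan2(ankle_y, ankle_x) - math.atan2(
        shank * math.sin(knee), thigh + shank * math.cos(knee)
    )
    return hip, knee


def leg_fk(hip, knee, thigh, shank):
    """Forward kinematics: ankle position for the given joint angles."""
    x = thigh * math.cos(hip) + shank * math.cos(hip + knee)
    y = thigh * math.sin(hip) + shank * math.sin(hip + knee)
    return x, y
```

For a reachable target, feeding the result of `leg_ik` into `leg_fk` returns the original ankle position, which is a convenient sanity check. A footstep detected on the floor sensor could play the role of the ankle target in such a model.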

Interactive Augmented Reality

One view of the future of computing that has captured our interest is augmented reality (AR), in which computer-generated virtual imagery is overlaid on everyday physical objects. In fact, AR is no longer a buzzword, and many demonstrations of AR systems and applications can be seen on YouTube and other video sharing websites as well as in technical publications.

We first developed an AR system in which a transparent display integrates the real world and the virtual world. The system has since been extended for use in a collaborative working environment, and several applications have been demonstrated to show its usefulness.

We are now developing a handheld AR system that allows the user to interact with a virtual object in AR space, based on the understanding that real and virtual objects should be manipulated in compatible ways. We also developed a system for authoring animations of virtual objects in AR-based 3D space. The virtual character superimposed on a marker is manipulated with a finger in front of the camera on the back of the mobile phone. This scheme gives the user the feeling of handling the character directly within the augmented 3D space.

Adaptation of Learning Materials with a Learning Management System

Considering that learning and teaching are among the most intellectual activities humans can engage in, e-learning is likely to become much more important in the coming years. We have carried out a project on adapting learning content to each learner's learning pattern, with the aim of improving learning performance, and are developing a system in conjunction with the Moodle learning management system. We also proposed a group learning map that visualizes the learning styles in a class, which can help instructors and learners achieve learning outcomes more effectively.

Other Projects (pending and completed in the last 10 years)

Auditory table system

Most of the computing systems in common use express information visually. Vision obviously plays an important role in interaction, but it is not the only channel. We focus on audition, another important channel through which the user perceives his/her environment. We have started a project aimed at spatial presence and perceptual realism of sounds, and a collaborative multimodal system has been implemented. Its central component is a two-dimensional sound display called Sound Table, in which 16 speakers are mounted in a 4x4 array. Multiple users can share the sensation that "sounds are here and there". A video projector mounted over Sound Table presents visual information on the table.
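One simple way to place a sound at an arbitrary point on a 4x4 speaker array is to share it among the four nearest speakers. The sketch below uses bilinear weights with constant-power normalization; this panning rule, the grid coordinates, and the function name are assumptions for illustration, and the method actually used by Sound Table may differ.

```python
import math


def speaker_gains(x, y, n=4):
    """Gains for an n x n speaker grid, for a sound at grid position (x, y).

    Coordinates run from 0 to n - 1 along each axis, with speakers at integer
    positions. The squared gains always sum to 1 (constant power).
    """
    x = min(max(x, 0.0), n - 1)
    y = min(max(y, 0.0), n - 1)
    i0 = min(int(math.floor(x)), n - 2)  # column of the lower-left neighbour
    j0 = min(int(math.floor(y)), n - 2)  # row of the lower-left neighbour
    fx, fy = x - i0, y - j0
    gains = [[0.0] * n for _ in range(n)]
    for dj, wy in ((0, 1 - fy), (1, fy)):
        for di, wx in ((0, 1 - fx), (1, fx)):
            gains[j0 + dj][i0 + di] = math.sqrt(wx * wy)
    return gains
```

A sound located exactly on a speaker is played by that speaker alone, while a sound between speakers is spread over its neighbours so that it is perceived at an intermediate position.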

Applications of the system to music mashup and reminiscence/life review have been demonstrated so far. We also presented a case study of using the platform in a classroom to enrich learning materials. The subject is Hyakunin-Isshu, the famous Japanese anthology of one hundred waka poems by one hundred poets, which is also known as an intellectual game played at home and school. Digital cards, each showing the second half of a poem, are spread out at random on the tabletop. The user tries to pick the card that matches the first half of the poem read aloud by a reciter (speech synthesis software). When the learner cannot find the right card, an auditory cue is also given as a hint at the position where it is placed.

Visualization of time-series data

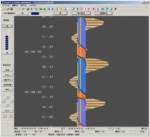

We first examined a technique for spiral-based visualization of time-series data. The simple linear timeline in common use does not support viewing the periodic aspects of time-series data, even though periodicity is one of the important factors in such data. Interestingly, while the radius of the spiral is initially proportionate, it can be adjusted so that target events belonging to a certain category are aligned, resulting in a non-uniform spiral. This can help the user find a temporal pattern in the data.
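The basic idea of a spiral timeline can be sketched as follows: each full turn covers one period, so events at the same phase of the period fall on the same ray from the center. This is only the uniform starting point, written with an Archimedean spiral and hypothetical parameter names; the non-uniform alignment step described above is not reproduced here.

```python
import math


def spiral_point(t, period, r0=1.0, ring_gap=1.0):
    """Map time t to (x, y) on a spiral where one turn spans one period.

    r0 is the radius at t = 0 and ring_gap is the radial distance between
    successive turns; both are assumed layout parameters.
    """
    turns = t / period
    angle = 2 * math.pi * turns
    radius = r0 + ring_gap * turns
    return radius * math.cos(angle), radius * math.sin(angle)
```

With a period of 7 days, for example, the points for day 3 and day 10 share the same direction and differ only in radius, so weekly patterns appear as radial alignments.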

We have developed a computer tool for patients on dialysis with the aid of information and communication technologies. A patient can access his/her own record of blood analysis results through a mobile phone, with a visualization that is simplified and customized for such patients. Furthermore, the system adopts several other techniques to help them lead a better life.

Idea generation

We have developed a system that helps the user recall something important (e.g., a birthday, anniversary, appointment, or homework assignment), or come up with ideas in general, by giving him/her a visual clue. The information presented may not be what the user is looking for, but it is meant to prompt the user to think of a matter of concern.

The figure on the right shows an interface of the system in which LoCoS symbols (originally proposed by Prof. Ohta) are used as visual elements. Each symbol moves independently of the others. An important point is that, as the figure shows, the symbols are transparent. When two or more symbols overlap on the screen, they are merged graphically, resulting in a new symbol. As a result, a virtually unlimited number of symbols can arise. For example, the user may associate a combination of two symbols at the upper position with the idea “I should go to a beauty parlor” or a combination of three symbols at the bottom with “Let’s buy a slider as a birthday present to my grandson”. An experiment showed that using the system improved the ability to recall.

Media understanding and enhancement

We were interested in how media convey information and affect the way people understand and think. There has been a variety of research on media in, for example, computer vision, speech understanding, and artificial intelligence. However, that research focuses on the analysis of a particular media type (i.e., text, image, video, or sound). Less work has been done on media themselves or on the interdependency among media entities. We investigated a mechanism of media understanding. As a very first step, we proposed a scheme for generating a sequence of scenic pictures from a narrative text. The input text is first decomposed into segments, each of which corresponds to the paragraph(s) to be printed on a separate page of a picture book. Key characters are extracted by referring to the pattern of their appearances in the segments. Finally, an image is generated for each segment by arranging the key characters on a stage and determining the position and pose of a camera accordingly.

Searching the web based on visual structures

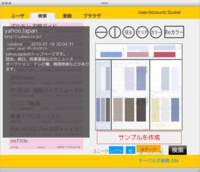

Searching for information on the web is a daily activity, and making that search effective and efficient is a key challenge. We proposed a system for web search based on the visual structure (layout and colors) of web pages. Considering that the interpretation of visual structures depends on individual perceptual characteristics, the creator and the searcher both take part in a session in which they express their own interpretation of the layout and colors of a target web page. Web search is then carried out by evaluating the similarities between those visual page structures. This search scheme can help people build richer social networks that take into account users' sensibilities and preferences regarding visual structural features.
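To make the idea of comparing visual page structures concrete, the sketch below describes each page as a coarse grid of color labels and scores two pages by the fraction of matching cells. This grid representation, the scoring rule, and the function names are all assumptions chosen for simplicity; the similarity measure used in the actual system is not described here.

```python
def layout_similarity(a, b):
    """Fraction of grid cells whose color labels match between two pages."""
    cells_a = [cell for row in a for cell in row]
    cells_b = [cell for row in b for cell in row]
    if len(cells_a) != len(cells_b) or not cells_a:
        raise ValueError("pages must use the same non-empty grid size")
    matches = sum(1 for p, q in zip(cells_a, cells_b) if p == q)
    return matches / len(cells_a)


def rank_pages(query, pages):
    """Return page names ordered from most to least similar to the query grid."""
    return sorted(
        pages,
        key=lambda name: layout_similarity(query, pages[name]),
        reverse=True,
    )
```

In this picture, the searcher's interpretation of a page would serve as the query grid and each creator's interpretation as a candidate, so the ranking reflects how closely the two interpretations agree.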

Video editing based on camera motion and object movement

Videos are active, rich media that people enjoy. Recent advances in digital technologies, specifically the development of consumer digital camcorders and mobile phones with cameras, make it possible to take videos with ease. This in turn creates a need for tools that let users edit the videos they have taken to suit their preferences. Although many video editing software packages are available, in most cases they only allow the user to cut a video into segments and arrange them along a timeline.

We first proposed a new editing scheme that allows the user to apply desired camera motions, specifically pan, tilt, and zoom, to a video shot after the shot has been taken. We then extended the system so that selected objects are placed and kept at the center of the frames, making the resulting video more attractive.
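One common way to imitate camera motion after a shot has been taken is to move and resize a crop window inside the recorded frames: shifting it horizontally acts as a pan, shifting it vertically as a tilt, and shrinking it as a zoom-in. The sketch below interpolates such a window linearly between two settings. It is an illustrative assumption with hypothetical names and parameters, not the editing scheme's actual implementation.

```python
def crop_windows(frame_w, frame_h, start, end, n_frames):
    """Interpolate a crop window between two (center_x, center_y, zoom) settings.

    Returns one (left, top, width, height) tuple per frame. A zoom of 1.0 shows
    the whole frame; larger values show a smaller region.
    """
    windows = []
    for i in range(n_frames):
        t = i / (n_frames - 1) if n_frames > 1 else 0.0
        cx, cy, zoom = (s + t * (e - s) for s, e in zip(start, end))
        zoom = max(zoom, 1.0)  # the window cannot be larger than the frame
        w, h = frame_w / zoom, frame_h / zoom
        # Keep the window inside the recorded frame.
        cx = min(max(cx, w / 2), frame_w - w / 2)
        cy = min(max(cy, h / 2), frame_h - h / 2)
        windows.append((cx - w / 2, cy - h / 2, w, h))
    return windows
```

Replacing the interpolated center with the tracked position of a selected object in each frame would keep that object at the center of the output, in the spirit of the extension described above.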

Introducti...
